|

UPDATE events SET generated_at = CAST(generated_at_raw as timestamp) įinally, if you forsee no more imports to this table, the raw VARCHAR timestamp columns may be removed. UPDATE events SET received_at = CAST(received_at_raw as timestamp) ĪLTER TABLE events ADD COLUMN generated_at TIMESTAMP DEFAULT NULL After import, add new TIMESTAMP columns and use the CAST() function to populate them: ALTER TABLE events ADD COLUMN received_at TIMESTAMP DEFAULT NULL The generated_at and received_at timestamps were imported as VARCHAR because Redshift cannot recognize ISO 8601 timestamps. To see load errors, run: SELECT * from stl_load_errors Optimizations Change timestamps To confirm that events were loaded, run: SELECT COUNT(*) FROM events Consider instructing Redshift to replace them with an underscore by adding this to the COPY: ACCEPTINVCHARS AS '_'Īlternatively, consider escaping them with: ESCAPE ACCEPTINVCHARS Confirm load With ACCEPTINVCHARS, Redshift will replace unrecognized UTF-8 characters with ?. Use the ACCEPTINVCHARS option ( here) to tell Redshift how to handle these un-importable characters. You may receive load errors due to UTF-8 characters, such as: String contains invalid or unsupported UTF8 codepoints.

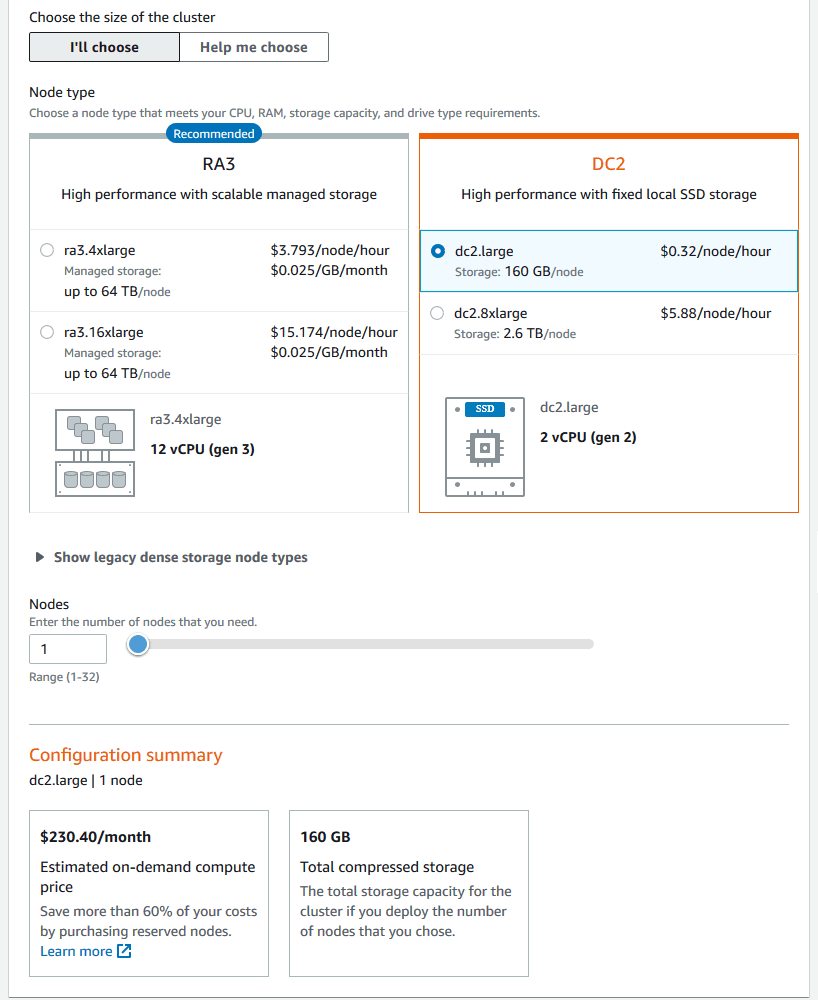

To specify a bucket in a different region, see the REGION operator. Redshift assumes that the S3 bucket is in the same region. For example: copy events from 's3://mylogbucket/papertrail/logs/dt=/.tsv.gz' Load errors Replace /papertrail/logs with the path on Archives.ĭata from only a specific day or days may be loaded by running the above command with specific paths instead of the key root. Load data from log archives: copy events from 's3:///'Ĭredentials 'aws_access_key_id= aws_secret_access_key='įor example: copy events from 's3://mylogbucket/papertrail/logs'Ĭredentials 'aws_access_key_id=abc123 aws_secret_access_key=def456ghi789' Redshift does not recognize ISO 8601 timestamps at import ( more), so timestamps are initially imported as VARCHAR and then converted to TIMESTAMP columns (below). Severity VARCHAR(9) NOT NULL ENCODE Text255, Source_ip VARCHAR(15) NOT NULL ENCODE Text32k,įacility VARCHAR(8) NOT NULL ENCODE Text255, To create the table, paste the following into psql: CREATE TABLE events ( We recommend using the id as the sort key and evenly distributing rows across nodes with DISTSTYLE even. A single-node cluster with default settings works fine. Start by launching a Redshift cluster and following the getting started guide to bring it online. To use Amazon Elastic MapReduce (EMR) or for a step-by-step walkthrough of setting up analytics services on AWS, see Log analytics with Hadoop and Hive. Papertrail log archives can be loaded into Amazon Redshift for ad-hoc relational queries against years of logs, such as: SELECT * FROM events WHERE message LIKE '%error%' Unix and BSD text log files (remote_syslog2)

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed